The configuration setting cost threshold for parallelism has a default value of 5. As a default value, it’s probably too low and should be raised. But what benefit are we hoping for? And how can we measure it?

The database that I work with is a busy OLTP system with lots of very frequent, very inexpensive queries and so I don’t like to see any query that needs to go parallel.

What I’d like to do is raise the configuration cost threshold to something larger and look at the queries that have gone from multi-threaded to single-threaded. I want to see that these queries become cheaper on average. By cheaper I mean consume less cpu. I expect the average duration of these queries to increase.

How do I find these queries? I can look in the cache. The view sys.dm_exec_query_stats can tell me if a query plan is parallel, and I can look into the plans for the estimated cost. In my case, I have relatively few parallel queries. Only about 300 which means the xml parsing piece of this query runs pretty quickly.

Measure the Cost of Parallel Queries

WITH XMLNAMESPACES (DEFAULT 'http://schemas.microsoft.com/sqlserver/2004/07/showplan') SELECT sql_text.[text] as sqltext, qp.query_plan, xml_values.subtree_cost as estimated_query_cost_in_query_bucks, qs.last_dop, CAST( qs.total_worker_time / (qs.execution_count + 0.0) as money ) as average_query_cpu_in_microseconds, qs.total_worker_time, qs.execution_count, qs.query_hash, qs.query_plan_hash, qs.plan_handle, qs.sql_handle FROM sys.dm_exec_query_stats qs CROSS APPLY sys.dm_exec_sql_text(qs.sql_handle) st CROSS APPLY sys.dm_exec_query_plan (qs.plan_handle) qp CROSS APPLY ( SELECT SUBSTRING(st.[text],(qs.statement_start_offset + 2) / 2, (CASE WHEN qs.statement_end_offset = -1 THEN LEN(CONVERT(NVARCHAR(MAX),st.[text])) * 2 ELSE qs.statement_end_offset + 2 END - qs.statement_start_offset) / 2) ) as sql_text([text]) OUTER APPLY ( SELECT n.c.value('@QueryHash', 'NVARCHAR(30)') as query_hash, n.c.value('@StatementSubTreeCost', 'FLOAT') as subtree_cost FROM qp.query_plan.nodes('//StmtSimple') as n(c) ) xml_values WHERE qs.last_dop > 1 AND sys.fn_varbintohexstr(qs.query_hash) = xml_values.query_hash AND execution_count > 10 ORDER BY xml_values.subtree_cost OPTION (RECOMPILE); |

What Next?

Keep track of the queries you see whose estimated subtree cost is below the new threshold you’re considering. Especially keep track of the query_hash and the average_query_cpu_in_microseconds.

Then make the change and compare the average_query_cpu_in_microseconds before and after. Remember to use the sql_hash as the key because the plan_hash will have changed.

Here’s the query modified to return the “after” results:

Measure the Cost of Those Queries After Config Change

WITH XMLNAMESPACES (DEFAULT 'http://schemas.microsoft.com/sqlserver/2004/07/showplan') SELECT sql_text.[text] as sqltext, qp.query_plan, xml_values.subtree_cost as estimated_query_cost_in_query_bucks, qs.last_dop, CAST( qs.total_worker_time / (qs.execution_count + 0.0) as money ) as average_query_cpu_in_microseconds, qs.total_worker_time, qs.execution_count, qs.query_hash, qs.query_plan_hash, qs.plan_handle, qs.sql_handle FROM sys.dm_exec_query_stats qs CROSS APPLY sys.dm_exec_sql_text(qs.sql_handle) st CROSS APPLY sys.dm_exec_query_plan (qs.plan_handle) qp CROSS APPLY ( SELECT SUBSTRING(st.[text],(qs.statement_start_offset + 2) / 2, (CASE WHEN qs.statement_end_offset = -1 THEN LEN(CONVERT(NVARCHAR(MAX),st.[text])) * 2 ELSE qs.statement_end_offset + 2 END - qs.statement_start_offset) / 2) ) as sql_text([text]) OUTER APPLY ( SELECT n.c.value('@QueryHash', 'NVARCHAR(30)') as query_hash, n.c.value('@StatementSubTreeCost', 'FLOAT') as subtree_cost FROM qp.query_plan.nodes('//StmtSimple') as n(c) ) xml_values WHERE qs.query_hash in ( /* put the list of sql_handles you saw from before the config change here */ ) AND sys.fn_varbintohexstr(qs.query_hash) = xml_values.query_hash ORDER BY xml_values.subtree_cost OPTION (RECOMPILE); |

What I Found

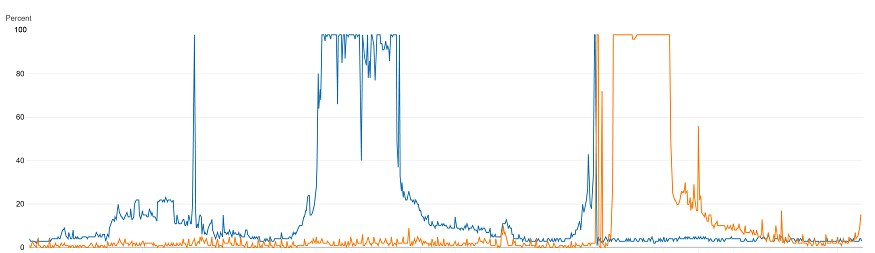

In general, increasing the threshold from 5 –> 50 generally had a very good effect on those queries that went from multithreaded to singlethreaded.

Over half of the queries improved at least 1 order of magnitude (and a couple improved 3 orders of magnitude!) https://t.co/iELhiSr7On pic.twitter.com/W4Mv7hGVlz— Michael J Swart (@MJSwart) January 19, 2022