This series includes a number of stand-alone posts that can fit together to tell a bigger story:

- Part One: Types of Performance Metrics

- Part Two: What’s Going On Inside Tempdb?

- Part Three: Avoid Frequent use of TVPs With Wide Rows

- Part Four: Troubleshooting Tempdb, a Case Study

There seem to be two main kinds of performance metrics: ones that measure trouble and ones that measure resources. I’ll call the first kind “alarm” metrics and the second kind “gauge” metrics. Alarm metrics are important, but I value gauge metrics more. Both are essential to an effective monitoring and alerting strategy.

Alarms

Alarms are great for troubleshooting; they indicate that it’s time to react to something. They have names containing words like timeouts, alerts and errors, but also words like waits or queue length. And they tend to be spiky. For example, think about a common alarm metric: SQL Server’s Blocked Process Report (BPR). The report provides (actionable) information, but only after a concurrency issue has been detected. Trouble can strike quickly, and SQL Server can go from generating zero BPR events per second to dozens or hundreds. Alarm metrics look like this:

Alarm Metric

Gauges

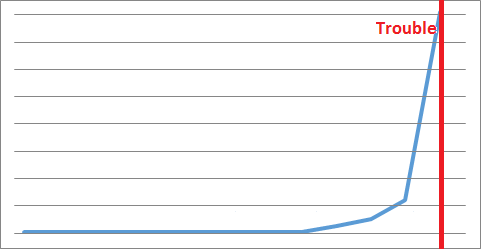

Now contrast that with a gauge metric. Gauge metrics often change value gradually and allow earlier interventions because they provide a larger window of opportunity to make corrections.

If you pick a decent threshold value, then any gauge can generate alerts (just like alarms do!). As they approach trouble, gauges can look like this:

Gauge Metric

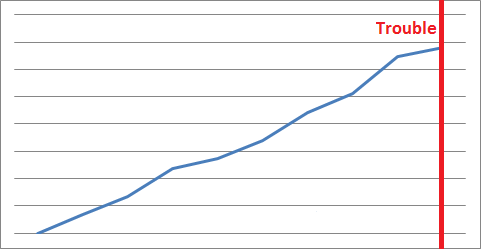
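Comparing a gauge against a threshold is all it takes to turn it into an alert. The sketch below is a minimal illustration, assuming the gauge is sampled at a fixed interval into a plain list (for example, % Processor Time readings). The helper `first_breach`, the sample values and the 80% threshold are hypothetical, not part of SQL Server or any monitoring tool.

```python
from typing import Optional, Sequence


def first_breach(samples: Sequence[float], threshold: float) -> Optional[int]:
    """Return the index of the first sample at or above `threshold`, or None.

    Hypothetical helper: it treats a rising gauge (e.g. % Processor Time)
    as an alert source by comparing each reading against a chosen threshold.
    """
    for i, value in enumerate(samples):
        if value >= threshold:
            return i
    return None


# Hypothetical readings taken once a minute; the gauge climbs gradually.
cpu_samples = [42.0, 48.5, 55.0, 61.2, 68.9, 74.3, 81.7, 88.0, 93.4]

idx = first_breach(cpu_samples, threshold=80.0)
print(idx)  # 6
```

Because the readings climb gradually, a threshold set well below 100% fires while there is still headroom left, which is exactly the larger window of opportunity a gauge offers.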

And the best gauge metrics are the ones that have their own natural threshold. Think about measuring the amount of free disk space or available memory. Trouble occurs when those values hit zero, and those gauges look like this:

Decreasing Gauge Metric
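One way to turn a natural threshold into an early warning is to project when a shrinking gauge will reach zero. The sketch below does that with plain linear extrapolation (an ordinary least-squares line through the samples), a technique swapped in here for illustration. It assumes the decline is roughly linear, which real disk or memory usage often is not. `days_until_zero` and the daily readings are hypothetical.

```python
from typing import Optional, Sequence


def days_until_zero(days: Sequence[float], free_gb: Sequence[float]) -> Optional[float]:
    """Estimate how many days after the last sample a shrinking gauge hits zero.

    Fits an ordinary least-squares line to the (day, value) pairs and solves
    for the day where that line crosses zero. Returns None when the trend is
    flat or rising, because a straight line then never reaches zero.
    """
    n = len(days)
    if n < 2 or n != len(free_gb):
        raise ValueError("need at least two paired samples")
    mean_t = sum(days) / n
    mean_v = sum(free_gb) / n
    # Covariance and variance terms of the least-squares slope.
    cov = sum((t - mean_t) * (v - mean_v) for t, v in zip(days, free_gb))
    var = sum((t - mean_t) ** 2 for t in days)
    if var == 0:
        raise ValueError("samples must be taken at different times")
    slope = cov / var
    if slope >= 0:
        return None
    intercept = mean_v - slope * mean_t
    zero_day = -intercept / slope  # where the fitted line reaches 0 GB
    return zero_day - days[-1]


# Hypothetical daily readings of free disk space, in GB.
remaining = days_until_zero([0, 1, 2, 3], [13.3, 12.3, 11.3, 10.3])
print(round(remaining, 1))  # 10.3
```

With a projection like this, you can alert when the estimated time to zero drops below the lead time you need to add capacity, instead of waiting for the value itself to get small.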

Examples

To further explain what I mean, here are some alarms paired with comparable gauges:

| Alarms | Gauges |
|---|---|
| Avg. Disk Read Queue Length | Disk Reads/sec |
| Processor Queue Length | % Processor Time |
| Buffer Cache Hit Ratio | Page lookups/sec |
| “You are running low on disk space” | “10.3 GB free of 119 GB” |
| Number of shoppers waiting at checkout | Number of shoppers arriving per hour |
| Number of cars travelling slower than the speed limit | Number of cars per hour |
| Number of rings of power tossed into Mount Doom | Ring distance to Mount Doom |
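The checkout row can be made concrete with a toy model. This is a hypothetical sketch, assuming a single checkout that serves a fixed 60 shoppers per hour and ignoring randomness in arrivals; `simulate_checkout` and the arrival numbers are illustrative only. The arrival rate plays the gauge and the waiting line plays the alarm.

```python
from typing import List, Tuple


def simulate_checkout(arrivals_per_hour: List[int], served_per_hour: int) -> List[Tuple[int, int]]:
    """Return (arrivals, shoppers still waiting) for each hour.

    Simplified deterministic model: shoppers who can't be served within an
    hour carry over to the next hour. Arrivals is the gauge; the waiting
    line is the alarm.
    """
    waiting = 0
    history = []
    for arrivals in arrivals_per_hour:
        # The line only grows once arrivals outpace what the checkout can serve.
        waiting = max(0, waiting + arrivals - served_per_hour)
        history.append((arrivals, waiting))
    return history


for arrivals, waiting in simulate_checkout([30, 40, 50, 58, 62, 70, 80], served_per_hour=60):
    print(f"arrivals={arrivals:3d}  waiting={waiting:3d}")
```

The waiting line reads zero for the first four hours even though arrivals nearly double, then jumps to 2, 12 and 32. A threshold on arrivals (say 55 per hour) would fire an hour before anyone is waiting.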

Hat tip to Daryl McMillan. Our conversations led directly to this post.


For some of them (both alarm & gauge), keeping a history is important too. It tells you HOW to react. For example, the free disk space history tells you how much space you should add so you don’t run into the same problem next week. Or it tells you whether this is a temporary memory spike, or whether it has been increasing gradually and I need to add more.

Great post! Thanks!

Comment by Kenneth Fisher — August 6, 2015 @ 2:09 pm

Good point!

You point out the value of information that is comprehensive and actionable.

I was focusing on earlier information.

Both are important for effective avoidance of trouble.

Comment by Michael J. Swart — August 6, 2015 @ 2:26 pm
