This series includes a number of stand-alone posts which can fit together to tell a bigger story

- Part One: Types of Performance Metrics

- Part Two: What’s Going On Inside Tempdb?

- Part Three: Avoid Frequent use of TVPs With Wide Rows

- Part Four: Troubleshooting Tempdb, a Case Study

Let’s say there’s a procedure that uses a table-valued parameter (TVP), and that TVP type has a large maximum width. Something like:

-- Create a message table type CREATE TYPE MessageTableType AS TABLE ( Message VARCHAR(MAX) ); |

Then each time the procedure is executed, SQL Server will pre-allocate six extents (48 pages) of space in tempdb whether or not it’s needed.

Measuring tempdb Activity

So what’s the problem? Well, if these procedures are called frequently (like hundreds or thousands of times per second), then the extra allocations for wide TVPs can be excessive. These allocations can cause tempdb latch contention.

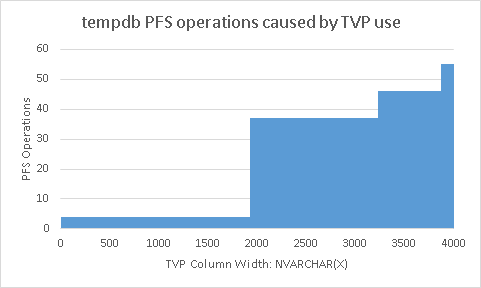

I found that SQL Server will allocate tempdb space based on the width of a TVP table. If a TVP uses a table type that has a NVARCHAR(10) column, then SQL Server doesn’t pre-allocate any space at all. But if the TVP table type has a NVARCHAR(4000) column, then SQL Server will allocate six extents of space. To measure the tempdb activity exactly, I wrote a program which let me generate this:

This chart takes some explaining. But first notice that a single query can cause up to 56 tempdb PFS operations!

Here’s how my program helped me create this chart. It starts by creating a table type. The table type has one column of type NVARCHAR(X). Next, the program executes a simple query that uses a table-valued parameter of that table type. The program measures the number of tempdb allocations and deallocations for various TVP widths: (X ranges from 1 to 4000). Also notice that

- I’m focusing on PFS operations here, but GAM activity shows similar activity

- The x axis corresponds to the maximum width of the column in the table type

- The y axis corresponds to the number of PFS operations found in tempdb. An extent is allocated with one PFS operation, but deallocated one page at a time, this results in nine PFS operations per extent.

- The steps correspond to four, five and six extents.

- There is some overhead associated with creating and dropping tempdb objects. But that overhead is avoided with temp table caching. And those operations have been removed from this chart.

- Table types that can be wider than a 8000 bytes such as those with multiple columns or with

NVARCHAR(MAX)columns are treated the same as table types with anNVARCHAR(4000)column; they’re given six extents of space.

What I Think

I’m still making an assumption that transaction log operations on PFS and GAM pages share a one-to-one relationship with latches on those pages. But experimentally, I have seen that skinny TVPs do in fact enjoy much higher throughput than wider TVPs. In general, this is really hard to measure. I’m frustrated that there are no good metrics to help measure this kind of thing.

Six extents for wide TVPs is really excessive and causes unnecessary pressure on tempdb latches when used frequently. I now begin to worry about the width of table types and the frequency that they’re used as table-valued parameters. But I don’t think we should have to. Stuff like this should be the concern of the database engine, not the database administrator.

Thanks

While writing this post, I really appreciated the help of Paul White and his post Temporary Table Caching Explained

I also appreciate the huge amount of information on SQL Server internals posted by Paul Randal on his blog. Especially posts like Understanding data vs log usage for spills in tempdb.

Focusing on a single database and procedures (leaving ad hoc queries to the side for the moment). We can find expensive procedures:

Comment by Michael J. Swart — August 31, 2015 @ 10:03 am

To give a sense of how big six extents is, here it is displayed graphically along side the size of the Lord of the Rings books:

Comment by Michael J. Swart — August 31, 2015 @ 1:16 pm

[…] Avoid Frequent use of TVPs With Wide Rows – Michael J. Swart (Blog|Twitter) […]

Pingback by (SFTW) SQL Server Links 04/09/15 - John Sansom — September 4, 2015 @ 3:01 am

[…] w/ @ExcelBIPASS Sept 10 – 12p CDT The default instance has a name Power BI Desktop & Excel Avoid Frequent use of TVPs With Wide Rows SQL 2014 queries under a strobe light Live Query Statistics in SQL 2014, not just SQL 2016 Build […]

Pingback by BPOTW 2015-09-04 | SQL Notes From The Underground — September 4, 2015 @ 6:05 pm